Joshua Martin

Degreed meteorologist with over 15 years of professional software development experience architecting and building database, desktop, embedded, cloud, mobile and web applications. I am experienced in the full development life cycle, object-oriented and functional programming, and research and development. I communicate well and work effectively across multiple projects.

Experience

Research Associate

Cooperative Institute for Severe and High-Impact Weather Research and Operations (CIWRO)

As a Research Associate for the Cooperative Institute for Severe and High-Impact Weather Research and Operations (CIWRO) and the National Severe Storms Laboratory (NSSL), I led the development effort to transition the Warn-on-Forecast System (WoFS) from an on-premises, hardware-specific application to a scalable, platform-agnostic cloud application (Cb-WoFS). WoFS is a high-resolution numerical weather prediction (NWP) model consisting of 36 individual members that assimilate conventional and remote sensing observations, radar reflectivity, velocity, and satellite data every 15 minutes and generate forecasts every half hour. Forecasts are post-processed in near-real time, and graphics are generated for forecasters to review during a severe or high-impact weather event. Transitioning WoFS into a cloud application required close familiarity with WRF-ARW, Fortran and GSI. The complete solution required the development of a C# .NET 5 web app integrated with Azure Batch for managing HPC resources and the WoFS workflow, containerization of WoFS (including WRF, GSI and other tools), continuous integration and continuous delivery pipelines (for automatic builds and publishing), Terraform scripts for cloud resource build-up and tear-down, containerization of the post-processing Python apps, and integration with the following Azure services: Batch, Storage, Queue, CosmosDB, Docker Container Registry, App Service / Functions, CDN and Azure Active Directory.

Senior Software Developer Consultant

As a Senior Software Developer Consultant in KiZAN’s Software Development group, I created software for clients in many different industries. Recent projects at KiZAN included ASP.NET MVC web applications implementing a service-oriented architecture via WCF services, together with NHibernate, AutoMapper and StructureMap (for dependency injection). A recent project for a mid-size manufacturer in Indiana involved developing an optimization algorithm in F#, with an ASP.NET MVC web front end and a SQL database for saved optimizations. In another project, I developed a mobile application for a nationwide field service company that was deployed to the iOS and Android app stores. The application was developed in Telerik’s NativeScript, which allowed for a common codebase while still permitting custom platform-specific module development.

Student Research Assistant

The CLOUDMAP project is focused on the development and integration of unmanned aircraft systems with sensors for atmospheric measurements. As a Research Assistant for the University of Oklahoma’s Center for Autonomous Sensing and Sampling (CASS), I assisted in UAV flights, sensor testing and placement, and MATLAB script development. I also learned the open-source Paparazzi project and developed flight plans for CLOUDMAP’s Small Unmanned Observer (SUMO) UAVs. In 2017, I architected a solution for sending sensor data to the cloud, where it could be processed and streamed live to the CLOUDMAP web application and any other subscriber interested in live atmospheric data. The solution involved custom development on the open-source ArduPilot platform (C++), custom MAVLink messages and multiple Azure cloud services such as Event Hub, Functions, Storage (NoSQL) and Service Bus.

Co-Owner / Lead Developer

SevereStreaming is the backbone of SevereStudios.com, a site where verified storm chasers can stream live video to the web. As the Lead Developer of SevereStreaming, I redesigned the streaming platform and moved all components to the cloud. The solution required building a web API for clients and partners to quickly download chasers’ live stream information, as well as multiple virtual machines for handling server load, automatic edge server creation, load balancer configuration and chasers’ video thumbnails. The solution involved ASP.NET Web API / C#, PHP, PostgreSQL, Amazon S3, AWS Load Balancer and Debian-based virtual machines hosted in EC2.

Intern

During the Fall 2017 semester, I worked as an intern with the Storm Prediction Center to develop a Python web application for forecast verification. The web application analyzed and compared forecast areas with actual storm reports using popular GIS Python packages. The results were then saved to a PostgreSQL database and plotted with HTML5-based graphing libraries.

Software Developer

As a developer for the Sales Compensation team, I built a new solution for Heartland's extensive payroll process. The solution used Microsoft SQL Server 2008 and Entity Framework 5; ASP.NET MVC 4, with Web API for REST and WCF for SOAP-based communication with other Heartland services; and HTML5 with extensive use of modular, AMD-compliant JavaScript for client-side features and communication with Web API.

Software Developer

Working for the leader in healthcare subrogation services, I was part of a large development team building internal tools for our auditors using WCF Services and Silverlight. Our analysts used the software we created to visualize and mine terabytes of data with the latest technologies, such as Entity Framework, Microsoft SQL Server 2008 and the .NET Framework 4.0.

Software Developer

In early 2005, I was hired as a Software Developer for one of the largest, most influential churches in the country, with a membership base of over 28,000 and a staff of 350 employees. I was responsible for creating numerous ASP.NET, Windows Forms and Windows Services projects with C# and VB.NET, using Microsoft SQL Server 2000 – 2008 for most database solutions.

Software Developer

I provide custom software development to clients across various industries. My solutions include web, database or client development, depending on the needs of the client. In a recent project for a mid-size company in downtown Oklahoma City, I developed a solution that let the client control their outdoor sign display from a web portal. The project involved an Arduino with network access that communicated with a small ASP.NET Core web app running on CentOS. The Arduino executed various light displays in response to the configuration created in the web app.

Education

Transcripts available upon request.

Areas of Expertise

- Python

- PostgreSQL

- C++

- C#

- ASP.NET MVC

- .NET Core

- Amazon AWS

- Debian

- Entity Framework

- F#

- Git

- HTML

- CSS

- SASS

- JavaScript

- jQuery

- MATLAB

- Azure

- .NET Framework

- IIS

- SQL Server 2000+

- VB.NET

- ADO.NET

- PHP

- NativeScript

- TFS

- Unit Testing / TDD

- Universal Windows Platform

- WCF

- WPF

- Workflow

- Web Services / REST

- Windows Services

- XML

- XSL

- Microsoft Certified Solutions Expert: Cloud Platform and Infrastructure

- Microsoft Certified Solutions Associate: Cloud Platform

- Microsoft Certified Solutions Associate: Web Applications

- Microsoft Certified Solutions Developer: App Builder

- Microsoft Certified Solutions Developer: Web Applications

- Microsoft Certified Solutions Developer: Windows Store Apps Using C#

- Microsoft Certified Solutions Developer: Windows Store Apps Using HTML5

- Microsoft Specialist: Programming in HTML5 with JavaScript and CSS3

- Microsoft Specialist: Programming in C#

- Microsoft Specialist: Developing Microsoft Azure Solutions

- Microsoft Specialist: Architecting Microsoft Azure Solutions

- Microsoft Specialist: Implementing Microsoft Azure Infrastructure Solutions

Projects

Center for Autonomous Sensing and Sampling App

The goal of this project was to build a network of autonomous UAVs that could sample the atmosphere at various intervals, as well as remotely on demand. Data would then be processed in the cloud and streamed live to interested parties, such as the local National Weather Service Forecast Office. This project was the topic of an AMS presentation on Tuesday, January 9, 2018.

During the 2017 semester, I architected a solution for sending sensor data to the cloud, where it could be processed and streamed live to any registered subscriber (via AMQP). In addition, I developed the WxUAS portal, where any observer can watch in near-real time as our UAVs ascend and descend through the atmosphere. The WxUAS portal plots pressure vs. temperature, dew point and flight path. You can view previous sessions (such as 10/6/2018, for example), or if you log on at the right time, perhaps catch a live flight!

The solution involved custom development on the open-source ArduPilot platform (C++), custom MAVLink messages and multiple Azure cloud services such as Event Hub, Functions, Storage (NoSQL) and Service Bus. The WxUAS portal was built on .NET Core and uses WebSockets for streaming live atmospheric sensor data to connected clients.

- C/C++

- Linux

- Debian

- F#

- TCP/UDP

- C#

- Azure

- .NET Core

- Web Sockets

- Google Maps

- JavaScript

- jQuery

- HTML5

- Type Providers

- WPF

- Azure DocumentDB

- Azure Event Hub

- Azure Service Bus

- Azure Functions

Point-Based Convective Outlook Verification App

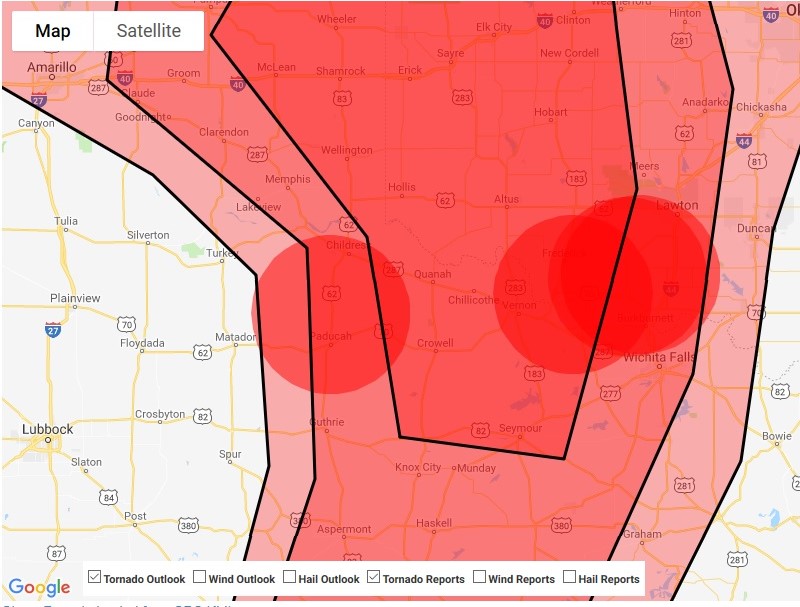

The Storm Prediction Center issues daily convective outlooks for the entire continental United States, breaking down severe weather risk into three categories: tornado, hail and wind risk. Probabilities (2%, 5%, 10%, 15%, 30% or 45%) of experiencing these severe weather events within 25 miles of any given point are attached to each forecast. These forecasts are then verified after all severe weather reports have been received in order to evaluate forecast accuracy.

The current forecast verification scheme plots the outlook on a latitude-longitude grid with 80-km spacing, with each grid cell then being assigned its respective outlook probability. This map is overlaid with verified storm reports, which are then assigned to their respective grid cells. This data is analyzed to determine the accuracy of the individual probabilistic outlooks. Unfortunately, this forecast verification scheme may not reveal the complete picture for a couple of reasons:

- The outlook is specifically for areas "within 25 miles of a point", whereas the verification scheme uses a grid-based approach.

- A storm report is assigned to a single grid cell (or single probabilistic region), but ideally, a storm report should be accounted for in as many probabilistic regions as it overlays (three, in the example above!).

During the Fall 2017 semester, I developed a Point-Based Convective Outlook Verification App for the SPC. This app analyzed the May/June 2017 outlook and report archive by overlaying the reports and probabilistic forecast regions, then generating and saving forecast accuracy analytics to a PostgreSQL database. The statistics were generated by overlaying a circle with a 25-mi radius on each storm report and counting that report toward every probabilistic region the circle overlaid.
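The point-based counting rule can be sketched in a few lines of Python. This is a minimal illustration rather than the application's actual code: it assumes reports and outlook polygons have already been projected to planar coordinates in miles, the function names and sample regions are hypothetical, and it uses only the standard library instead of a geometry package so that it stays self-contained.

```python
import math

RADIUS_MI = 25.0  # neighborhood radius taken from the outlook definition


def point_in_polygon(pt, poly):
    """Ray-casting test; poly is a list of (x, y) vertices in miles."""
    x, y = pt
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal ray
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def dist_to_segment(pt, a, b):
    """Shortest planar distance from pt to the segment a-b."""
    px, py = pt
    ax, ay = a
    dx, dy = b[0] - ax, b[1] - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:
        return math.hypot(px - ax, py - ay)
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))  # clamp to the segment
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def circle_touches_polygon(center, radius, poly):
    """True if a circle around center overlaps the polygon at all."""
    if point_in_polygon(center, poly):
        return True
    n = len(poly)
    return any(
        dist_to_segment(center, poly[i], poly[(i + 1) % n]) <= radius
        for i in range(n)
    )


def regions_for_report(report, regions, radius=RADIUS_MI):
    """Probabilities of every region overlaid by the report's circle."""
    return [
        prob for prob, poly in regions
        if circle_touches_polygon(report, radius, poly)
    ]


def square(lo, hi):
    return [(lo, lo), (hi, lo), (hi, hi), (lo, hi)]


# Hypothetical nested outlook regions (probability in %, polygon in miles).
SAMPLE_REGIONS = [(5, square(0, 200)), (15, square(50, 150)), (30, square(80, 120))]

if __name__ == "__main__":
    # A report 20 miles outside the 30% area still counts toward it.
    print(regions_for_report((60.0, 100.0), SAMPLE_REGIONS))
```

In this sketch a single report can count toward several probabilistic regions at once, which is the behavior the grid-based scheme cannot capture.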

The application lets the user browse historical verification results with interactive maps and graphs, re-analyze with user-defined variables (such as the 25-mi radius, dates and outlook times), and generate accuracy reports over specific timeframes. The project was built with Python and various open-source tools.

- Python

- bottle

- sqlalchemy

- shapely

- pyproj

- Google Maps

- JavaScript

- jQuery

- HTML5

- PostgreSQL

Live Streaming / Web API / Storm Chasers App

Severe Studios is a platform where verified storm chasers can live stream their chasing adventures to the web. Users can freely keep tabs on active chasers via the Live Storm Chasing page, virtually experiencing the severe weather from their desktop or mobile device.

I joined the Severe Streaming team in 2013 and rebuilt the streaming platform, moving it from Windows Media Services to a cloud-based service capable of live streaming to all the major client platforms and adjusting to high demand during severe weather days. An API was developed for business partner usage, as well as a Windows Store App, complete with a chaser map and NWS-issued products.

- C#

- .NET Core

- Web Sockets

- Amazon AWS

- Debian

- PostgreSQL

- Azure

- GIS

- JavaScript

- jQuery

- HTML5

- F#

- Azure VM

- PHP

- Bash

- UWP

Publications and Presentations

Coauthored

- Segales, A. R., Greene, B. R., Bell, T. M., Doyle, W., Martin, J. J., Pillar-Little, E. A., and Chilson, P. B., 2020: The CopterSonde: An Insight into the Development of a Smart UAS for Atmospheric Boundary Layer Research. Atmos. Meas. Tech. Discuss., https://doi.org/10.5194/amt-2019-421.

- Chilson, P. B., and Coauthors, 2019: Moving towards a Network of Autonomous UAS Atmospheric Profiling Stations for Observations in the Earth's Lower Atmosphere: The 3D Mesonet Concept. Sensors, 19, 12, 2720, https://doi.org/10.3390/s19122720.

- Segales, A. R., Chilson, P. B., Martin, J., Umeyama, A., Greene, B. R., and Duthoit, S., 2018: Harnessing the Power of the Ardupilot and Pixhawk for UAS Atmospheric Research: An Integrative Approach. 98th Annual Meeting, Austin, Texas, Amer. Meteor. Soc., https://ams.confex.com/ams/98Annual/webprogram/Paper334073.html

- Clark, A. J., I. L. Jirak, B. T. Gallo, K. H. Knopfmeier, B. Roberts, M. Krocak, J. Vancil, K. A. Hoogewind, N. A. Dahl, E. D. Loken, D. Jahn, D. Harrison, D. Imy, P. Burke, L. Wicker, P. S. Skinner, P. L. Heinselman, P. Marsh, K. A. Wilson, A. Dean, G. J. Creager, T. A. Jones, J. Gao, Y. Wang, M. Flora, C. K. Potvin, C. A. Kerr, N. Yussouf, J. Martin, J. Guerra, B. C. Matilla, and T. J. Galarneau, 2022: The 2nd Real-Time, Virtual Spring Forecasting Experiment to Advance Severe Weather Prediction. Bulletin of the American Meteorological Society, 103, E1114–E1116, https://doi.org/10.1175/BAMS-D-21-0239.1.